By Daljeet Virdi (cast.app, UIUC), Dileep Pasumarthi (Microsoft Bing, UIUC), and Dickey Singh.

This is an updated and edited version of the article we published here in January 2020.

Natural Language Generation from Structured Data

Over the past few years, rapid improvement in state-of-the-art (SOTA) general language models (BERT, GPT-2, GTP-3, XL-Net, etc. and BERT-forks like Albert and Ernie) from new neural architectures (LSTMs, Transformers, etc.) and different pre-training techniques (MLM, bi-directionality, etc.) has led to human-level abilities over a wide range of natural language processing tasks (question answering, translation, text to speech, etc.). Many researchers are comparing these NLP developments to have the same wide-ranging impact on language, that ImageNet had on computer vision.

In this essay, we explore a subfield of NLP, Natural Language Generation, and one technique to generate text from structured data (data-to-text synthesis). Potential applications include auto-generating news articles, weather reports, industry reports, and business insights. These pieces can be hyper-personalized by local, context, and reading style. For example, data from babies in neonatal care can be converted into text differently, with different levels of technical detail and explanatory language, depending on whether the intended reader is a doctor, a nurse, or a parent (Mahamood & Reiter, 2011).

For instance, a human journalist would not write separate reports about a sports match fr different fans. However, a machine can easily generate different sports reports for fans of the respective teams, with versions that are uniquely relevant to individual audiences. Fans would appreciate a more personally relevant report that includes not only content but tone. As an example, one team’s winning goal is likely to be considered luck from the losing team’s perspective.

In this essay, we describe an approach to creating narrative summaries from financial time series data by using new SOTA language models instead of template-based strategies. Financial news means different things to different people, based on an individual’s portfolio. This research can usher in a new class of financial news generation, moving from one news article for everyone to one news article per recipient, with direct application in wealth management, financial reporting, and investor relations.

An NLG system involves three processes:

- Content determination — what you’re going to say.

- Sentence planning — how are you going to say it.

- Surface realization — style and flow, or which specific words to use.

Developers traditionally implement these three NLG stages and assemble them in a pipeline architecture. Each piece requires codifying rules in templates and significant engineering effort. Most natural language applications have been first built in this rule-based way.

We are amidst a massive overhauling of these applications to neural-based architectures as deep learning NLP research develops. For example, up until ~2015, production-grade spell checkers were roughly 2000 lines of code. With probabilistic neural approaches, modern spell checkers can be as little as ~17 lines of code. The complexity of these systems has shrunk in some ways, less rote rule-based programming, but grown in others, as new questions arise: how accurate, precise, bias are these models?

Most modern generalized language models create coherent natural language (see: GPT-2, BERT, etc.), but practitioners have warned to avoid use in production use cases because they cannot distinguish “fact from fiction.” The text may sound natural but is often not accurate or coherent.

We ‘fine-tune’ these models, training them specifically to be precise and factual when generating text from structured data. Our approach includes two critical improvements to SOTA generalized language models:

- A copy-mechanism to select and copy content from structured data

- General language model (GPT-2, BERT, XL-NET) to compose coherent sentences.

We use the pre-trained domain-independent language model, GPT-2 in this case, but any BERT-based model will do, and train it to learn a copy-mechanism using just a few (< 200 examples) training examples.

Example – Wikipedia article + 200 samples

Let’s take an example. Wikipedia articles about people often come with a table of structured data, and the first few sentences in the article narrate that table. With just 200 Wikipedia articles about people of the millions online, we can fine-tune the general language model, GPT-2, to write sentences about people that sound like the sentences written by Wikipedia authors and score 16 BLEU points.

Using 200 lines of structured data (sample) and the first few lines as input from a wikipedia article.

charles whibley ( 1859–1930 ) was an english literary journalist and author.

we can generate the following:

rihards kuksi ( born 17 july 1988 in riga , latvia ) is a latvia scholar at a rihard kuksi university in slorida .

chris cron ( born aug 1964 ) is a former american right-handed hitter .

neil murphy ( born 19 may 1980 ) is a former english footballer who played as a central defender .

hüinsey atalay ( born 27 october 1991 ) is a turkish footballer currently playing for antalya

The style mirrors that of Wikipedia writers and surfaces interesting behaviors. For example, the model knew that because the birthplace of Chris Cron was ‘boston’, the man was ‘american’.

Example – New York Exchange data + 200 samples

We extend this work to the finance domain. We were unable to find clean structured data and summaries for finance so we built it ourselves. Luckily, since our approach is few-shot, meaning it requires less than 200 examples, this is no barrier. But as engineers, we automated even this. We took publicly available New York Stock Exchange data, aggregated and analyzed it using PySpark, extracted key metrics, and wrote templatized summaries using the realization engine, SimpleNLG.

We plugged in this training data into our model but were surprised to find it learned the template structured in less than 100 epochs. So, we tried a small hack to add more variety. We used the Datamuse API to find synonyms of words before we created sentences and randomly picked 2–5 sentences from 10–15 generated.

Sample Data: financial data

Result:

That is an average decrement of $2.17 comparative to past month! Not good.Apple had the shortest stock price with $105.44.And the average this period of time is more or less $111.13, give or take 19.24.The awaited average stock cost for Apple in the expected month is $108.96!.

After the 50th epoch:

Apple had the top stock value with $115.57.Apple Averages were 111 this quarter..Interested to know more?. Here is some Averages slue to empathize!.Apple Averages were 111 this quarter..

After the 700th epoch:

That is an average decline of $1.67 relative to past month! That is an average diminish of $1.03 compared to past month! Not good. Apple had the least stock price with $105.44.

As you can see, our hack of using synonyms got in the way and highlights why having clean training data is important. To improve these results we can use Amazon Mechanical Turk to build a small minimum viable corpus or partner with a financial service provider with internal data sets.

Additional applications

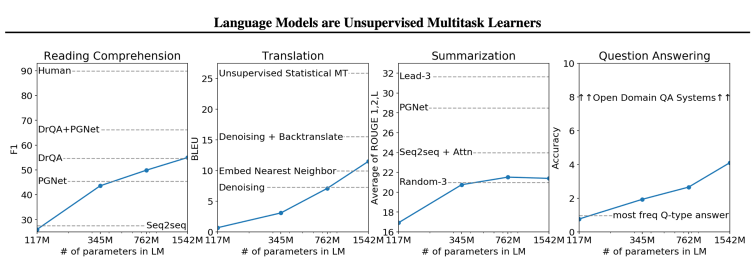

Since our approach only takes 200 examples, thanks to transfer learning, we were able to painlessly try it on many other datasets we encountered, like restaurant reviews and weather summaries. We also swapped out the 117M param GPT-2 model with the more robust 1.5B param model one, which has much stronger benchmarks, see below. We can easily continue to swap them out as better, more nuanced, and general language models become available.

The creators of GPT-2 cautioned:

large-scale language models like GPT-2 do not distinguish fact from fiction, and we don’t support use-cases that require the generated text to be true.

However, training them on structured data and teaching them, adding a copy-mechanism, and a few other modifications have considerably reduced this risk and open a wide range of applications.

Update

GPT-3 released on June 1, 2020, has 175 billion parameters. This means, that the task-agnostic models are working at human-level performance, and can be used for a number of language tasks.

References:

[1]https://www.bluewolf.com/bluewolf-now/future-retail-weather-insights-qa-ibms-paul-walsh

[2]https://www.earthnetworks.com/blog/5-key-insights-from-our-airport-weather-report-view-infographic/

[4] https://github.com/rikdz/GraphWriter

[5] https://arxiv.org/pdf/1707.08052.pdf

[6] https://arxiv.org/pdf/1706.09254.pdf

[7] https://web.stanford.edu/class/cs224n/slides/cs224n-2019-lecture15-nlg.pdf

[8] http://www.visionandlanguage.net/workshop2019/

[9] https://medium.com/@samim/generating-stories-about-images-d163ba41e4ed

[10] http://www.visionandlanguage.net/workshop2019/program.html

[11] https://arxiv.org/abs/1904.09521

[12] https://github.com/ratishsp/data2text-plan-py

[13]https://www.youtube.com/watch?v=3oEb_fFmPnY&list=PLtmWHNX-gukKocXQOkQjuVxglSDYWsSh9&index=14

[14] https://github.com/fastai/course-nlp/blob/master/7b-seq2seq-nucleus.ipynb

[15] https://github.com/jitta/Natural-Language-Generation

[16] arxiv.org/abs/2005.14165 (GPT-3)