Pervasiveness of Artificial Intelligence augmented Digital Assistants and what’s next

Communicating with Artificial Intelligence augmented Digital Assistants through voice and text conversational interfaces has the potential to be as revolutionary as graphical user interfaces, web browsing, search, apps and multi-touch screens.

Jarvis

Siri

Siri — circa 2011

Our devices have had Personal Assistants for years, with Apple baking the Siri app into the platform with iOS 5. Siri has roots in DARPA’s Cognitive Assistant that Learns and Organizes (CALO) and Personalized Assistant that Learns (PAL) initiative.

Siri understands natural language, uses the environment as context and helps solve everyday problems. Siri converts speech to text and then to intent based on the semantic understanding of natural language. It has trusted access to personal information and is aware of location, time, calendars and tasks.
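The text-to-intent step described above can be illustrated with a toy, rule-based parser. This is only a sketch of the general idea, not Siri’s actual implementation: the intent names, slot names and regular expressions below are all hypothetical, and it assumes the speech has already been transcribed to text.

```python
import re

# Hypothetical intents and patterns, chosen purely for illustration.
INTENT_PATTERNS = [
    ("set_reminder", re.compile(r"remind me to (?P<task>.+?) at (?P<time>.+)")),
    ("get_weather", re.compile(r"(?:what is|what's) the weather in (?P<place>.+)")),
]


def parse_intent(utterance):
    """Map transcribed text to an intent name plus extracted slots."""
    # Normalize case and drop trailing punctuation before matching.
    text = utterance.lower().strip().rstrip("?.!")
    for name, pattern in INTENT_PATTERNS:
        match = pattern.fullmatch(text)
        if match:
            return {"intent": name, "slots": match.groupdict()}
    return {"intent": "unknown", "slots": {}}


reminder = parse_intent("Remind me to call mom at 5 pm")
weather = parse_intent("What's the weather in Half Moon Bay?")
unknown = parse_intent("Sing me a song")
```

A production assistant would replace the hand-written patterns with learned models, but the output shape (an intent plus its slots) is a common way to hand a request over to a backing service.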

Siri initially started as a rule-based, constraint-satisfying system connected to 42 services that expose proprietary APIs, which the Siri servers use to assist and answer questions, set up reminders, check the weather, buy tickets, etc. The services included Yelp, StubHub, Rotten Tomatoes, Wolfram Alpha, Citysearch, Gayot, Yahoo! Local, AllMenus.com, Google Maps, BooRah, Orbitz, OpenTable and others.

Nuance Communications provided Siri’s speech recognition technology initially. Siri used Hidden Markov Models (HMM) for Automatic Speech Recognition (ASR) and guided visual and text-to-speech dialogs as the interface to users.

Siri has historically relied on:

- PAL Semantic Extraction for extracting email, addresses, URLs, locations, structured phrases, etc. from text

- MinorThird for storing, annotating, and categorizing text as well as learning to extract entities

- MAchine Learning for LanguagE Toolkit (Mallet) for document classification, clustering, and information extraction

Siri — Mid 2014 to Now

Siri and other modern digital assistants now use neural networks extensively.

In mid-2014, Apple noticed significant improvements when it adopted enhanced versions of Hidden Markov Models (HMM), n-gram language modeling and deep learning technologies to power the Siri backend.

The deep learning technologies Siri transitioned to include convolutional neural networks (CNN and NLP-Convonets), deep neural networks (DNN), and recurrent neural networks (RNN) using both long short-term memory (LSTM) units and gated recurrent units (GRU).

Nuance-based speech recognition was also replaced by an internal machine-learning-based voice platform, with automated scheduled deployments handled by a customized Apache Mesos setup called Just A Rather Very Intelligent Scheduler (Jarvis).

At WWDC 2017, Apple announced that Siri in iOS 11 would have a smoother, more natural-sounding text-to-speech voice. Users can also type to Siri instead of speaking, but only through an accessibility setting that disables the voice interface. Siri in iOS 11 shows multiple results and asks follow-up questions to remove ambiguity.

Update (August 23, 2017): The Siri team wrote on the Apple Machine Learning blog about the speech synthesis (text-to-speech) part of Siri.

Instead of Hidden Markov Models (HMM), the newest approach uses a deep mixture density network (Deep MDN) to predict the distributions over the feature values. MDNs combine conventional deep neural networks (DNNs) with Gaussian mixture models (GMMs).
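To make the DNN-plus-GMM combination more concrete, here is a minimal sketch of what the output layer of a mixture density network does. It is not Apple’s implementation: it assumes a single one-dimensional feature, and the layout of the raw output vector (logits, then means, then log standard deviations) is an arbitrary choice made for this example.

```python
import math


def mdn_parameters(raw, n_components):
    """Turn a raw network output vector into Gaussian mixture parameters."""
    k = n_components
    logits, means, log_sigmas = raw[:k], raw[k:2 * k], raw[2 * k:3 * k]
    # Softmax keeps the mixture weights positive and summing to one.
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    weights = [e / total for e in exps]
    # Exponentiation keeps the standard deviations positive.
    sigmas = [math.exp(v) for v in log_sigmas]
    return weights, list(means), sigmas


def mixture_density(x, weights, means, sigmas):
    """Probability density of x under the predicted 1-D Gaussian mixture."""
    return sum(
        w * math.exp(-0.5 * ((x - m) / s) ** 2) / (s * math.sqrt(2.0 * math.pi))
        for w, m, s in zip(weights, means, sigmas)
    )


# Stand-in for the final-layer output of a DNN with two mixture components.
raw_output = [0.0, 0.0, -1.0, 1.0, 0.0, 0.0]
weights, means, sigmas = mdn_parameters(raw_output, n_components=2)
density_at_zero = mixture_density(0.0, weights, means, sigmas)
```

The point of the design is that the network predicts a whole distribution over a feature value rather than a single number, and training would minimize the negative log of this density at the observed value.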

Read the blog here: Deep Learning for Siri’s Voice: On-device Deep Mixture Density Networks for Hybrid Unit Selection Synthesis

AI Digital Assistants

Consumer AI-Assistants

Many AI-based Personal Assistants have been introduced since Siri’s success, including Microsoft’s Cortana, Amazon’s Alexa, Google’s Assistant, Samsung’s Bixby, Viv (now Samsung), and Facebook’s M inside Messenger.

These competitive assistants want to “get things done” using conversational interfaces and are differentiated from each other in one or more ways.

- Google, for instance, supports offline speech recognition and has a lot of data to train deep learning algorithms.

- Viv can generate sequenced scripts that use multiple stackable services to answer richer, more complex queries. For example, at the launch demo, the question asked was: “Will it be warmer than 70 degrees near the Golden Gate Bridge after 5 pm the day after tomorrow?”

- Microsoft has claimed “Human Parity” when it comes to ASR transcription.

- IBM has disputed Microsoft’s claim of Human Parity.

Non-consumer AI-Assistants

Several companies have introduced or are introducing AI-based Assistants for day-to-day enterprise tasks.

- Fuse Machines is an AI Assistant for Inside Sales Teams.

- Kasisto.com is a platform providing digital assistants for banking.

- Zoom.ai is an AI business assistant for work.

- Sudo.ai is a Slack bot that connects CRM and email.

- SkipFlag and butter.ai organize workplace knowledge.

- Chorus.ai helps understand and close more sales deals by recording, transcribing, and analyzing sales calls and meetings.

- x.ai and Clara Labs are scheduling and calendaring assistants.

- Magic is an assistant that combines humans and AI to assist users over text and SMS.

- Conversica has an automated sales assistant that reaches out to leads to engage them in a human conversation and hands over to a human once a lead looks promising. Spiro.ai and Leadcrunch.ai are also focused on discovering hot leads. Colabo is a virtual sales assistant that sits on top of CRM and helps in automating sales and increasing growth.

Some companies are building platforms to enable the creation of AI-Assistants or bots.

- RobinLabs is a Conversational Natural Language Understanding (NLU) platform to build bots.

- Howdy.ai helps in creating bots. kitt.ai offers Conversational Understanding as a Service.

- Octane.ai offers marketing automation over Facebook messaging.

- Automat.ai is a personalized conversational marketing platform.

- Maluuba (now Microsoft) uses deep learning and reinforcement learning for understanding language.

- Cortical.io (based on technology from Numenta), luminoso.com, wit.ai (now Facebook), Gridspace, and popuparchive.com are working on next generation Natural Language Understanding (NLU).

- Semantic Machines uses reinforcement learning and AI to take context into account when understanding natural language, in order to build next generation conversational interfaces.

What’s next for Digital Assistants?

Real-World Accuracy Improvements

In the lab, the Word Error Rate (WER) of Automatic Speech Recognition (ASR) has dropped to 16% when using the Expectation Maximization (EM) algorithm and Gaussian mixture models (GMM) with Hidden Markov Models (HMM), although this requires very large training data sets.

Using feed-forward Deep Neural Networks (DNN) with many hidden layers together with Hidden Markov Models (HMM), the WER drops to 12%. See Deep Neural Networks for Acoustic Modeling in Speech Recognition (page 17 Table III).

NN-grams is a language model that unifies n-grams and neural networks (NN) for speech recognition. The model takes both word histories and n-gram counts as input. By combining the memorization capacity and scalability of an n-gram model with the generalization ability of neural networks, NN-grams outperforms n-gram models.

Using joint training of both Convolutional and Non-convolutional neural networks, WER dropped to 10.4%. See (page 5611, Table 4).

Recently, Microsoft, IBM, and Baidu have achieved Human Parity (in line with the 5% WER for Manual Speech Recognition by humans).
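For readers unfamiliar with the metric, WER is conventionally computed as the word-level edit distance (substitutions, deletions and insertions) between the recognizer’s output and a reference transcript, divided by the number of words in the reference. A minimal sketch follows; the function name and the example sentences are made up for illustration.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # prev[j] holds the edit distance between the reference words seen
    # so far (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word was dropped
                curr[j - 1] + 1,     # insertion: extra hypothesis word
                prev[j - 1] + cost,  # substitution, or a match when cost is 0
            ))
        prev = curr
    return prev[-1] / len(ref)


# One dropped word ("a") and one substituted word ("morning" -> "warning").
wer = word_error_rate(
    "set a reminder for nine tomorrow morning",
    "set reminder for nine tomorrow warning",
)
```

Here two errors against a seven-word reference give a WER of 2/7, or roughly 29%, which shows how quickly a couple of misheard words add up on short commands.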

In the real world, such WER numbers are not yet achievable for the following reasons:

- The real-world vocabulary is much larger

- More than one speaker involved in the conversation

- Continuous speech is harder to recognize

- Additional constraints imposed by task and language

- Spontaneous conversation differs from read speech

- Presence of noise and adverse conditions

WER numbers are expected to improve for real-world environments because of advancements in Deep Learning.

Deep learning is getting simpler over time and its usage is growing. Modern algorithms can be implemented with half the code compared to earlier efforts.

Keeping Track of multiple speakers in conversations and messages

Being able to assist a group of people in a conversation is useful both in enterprise and non-enterprise environments. Chorus.ai, for example, can listen in on conversations between sales people and customers to analyze calls.

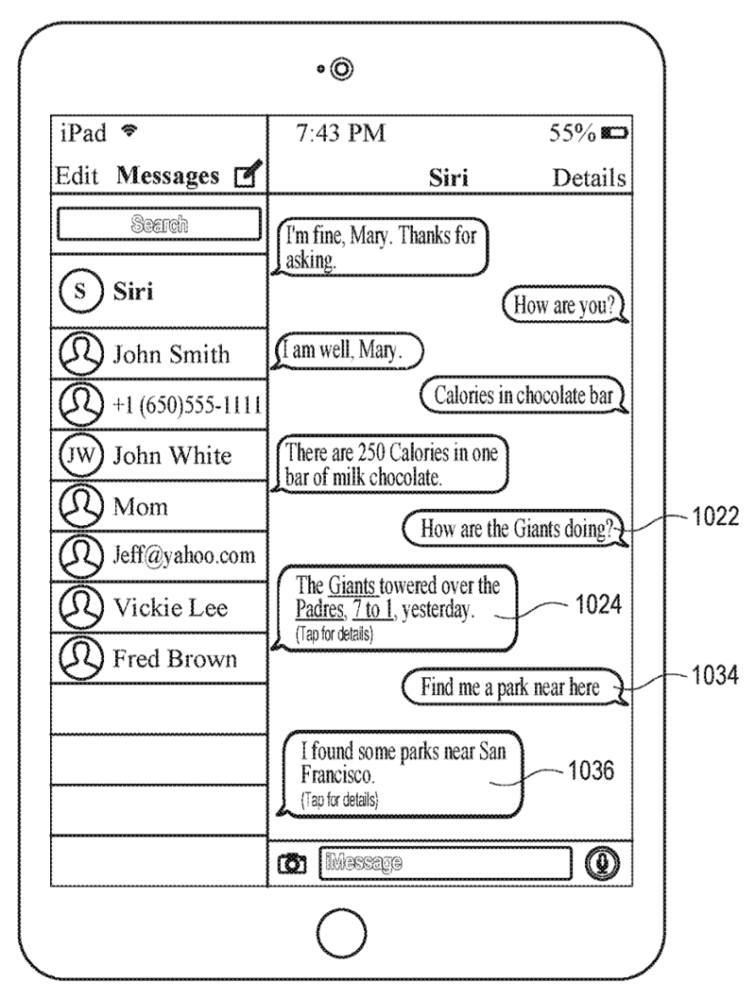

In a later version of iOS, Siri is coming to messaging environments with one or more people as indicated in the Apple Siri patent.

More Services and Standardization

Siri launched as a giant mashup of 42 services.

The more services a digital assistant can use, the more helpful it becomes to users. However, every service has its own custom API, format, and schema. Services that use a vocabulary designed to support interoperability of structured data are much easier to discover and use.

Standardizing data flow between services improves how digital assistants can use services without needing an expensive coordinator.
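As a rough illustration of why a shared vocabulary helps, the sketch below normalizes the responses of two services into one common record shape, so the assistant’s ranking logic does not need to know which service a result came from. The services, field names and rating scales are entirely hypothetical.

```python
def from_service_a(payload):
    # Hypothetical service A: 5-star rating scale, nested location object.
    return {
        "name": payload["title"],
        "rating": payload["stars"],
        "city": payload["location"]["city"],
    }


def from_service_b(payload):
    # Hypothetical service B: 10-point score, flat field names.
    return {
        "name": payload["biz_name"],
        "rating": payload["score"] / 2.0,  # rescale to the 5-point scale
        "city": payload["town"],
    }


def best_rated(records):
    """Pick the top result without caring where each record came from."""
    return max(records, key=lambda record: record["rating"])


records = [
    from_service_a({"title": "Cafe One", "stars": 4.0,
                    "location": {"city": "Half Moon Bay"}}),
    from_service_b({"biz_name": "Cafe Two", "score": 9.0,
                    "town": "Half Moon Bay"}),
]
top = best_rated(records)
```

If both services already published their data in a shared vocabulary, the two adapter functions would be unnecessary, which is the saving the paragraph above points to.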

Instead of coding calls to various services, Viv (now Samsung) generates code or a sequence of scripts to use multiple services, allowing new services to be used as they are discovered and made available.

On-device and on-chip handling of common tasks

GPUs have revolutionized the training of deep learning algorithms by providing energy-efficient calculations at massive scale. However, GPUs aren’t always ideal for inference, that is, for executing trained neural networks. Application-specific integrated circuits (ASICs) such as Google’s Tensor Processing Unit (TPU), field-programmable gate arrays (FPGAs) and specialized CPUs are being developed for swiftly executing neural networks. The Apple Neural Engine is expected to assist in on-device facial recognition and speech recognition.

These dedicated chips can execute a subset of speech recognition tasks directly on a smart device, instead of sending everything to the cloud.

In the future, devices could also offload processing to nearby connected devices such as Apple HomePod speakers, Apple TV, or access points to further save device battery.

Update July 24, 2017: Microsoft announced an on-device chip for both image and speech recognition as part of its HoloLens goggles, to minimize sending data to the cloud for speech and image understanding.

Deep Links and SiriKit

Apple has opened up Siri to apps via SiriKit, which means the operating system has to perform all the coordination when using multiple services. Similar to how deep links work between apps, apps could pass intents, concept objects and action objects to other apps.

Context — Context — Context

Digital Assistants use Natural Language Understanding (NLU) along with context to help solve everyday problems. Context includes:

- What you know about the user’s current environment, i.e. location, time of day, motion status, device dock status, device connectivity type, etc.

- What you know about the user from the current conversation, and

- What you know about the user from previous conversations.

Long Short Term Memory (LSTM) networks are specialized Recurrent Neural Networks (RNNs) capable of learning and remembering information for long periods of time. RNNs are extremely effective for speech recognition.
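The way an LSTM holds on to information can be seen in a single update step. The sketch below is a deliberately tiny, single-unit cell with scalar input and state, written for illustration only; the parameter names and values are assumptions, and real networks use vectors and learned weights.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def lstm_step(x, h_prev, c_prev, params):
    """One time step of a single-unit LSTM cell (scalar input and state)."""
    def gate(name, squash):
        w_x, w_h, bias = params[name]
        return squash(w_x * x + w_h * h_prev + bias)

    f = gate("forget", sigmoid)        # how much old memory to keep
    i = gate("input", sigmoid)         # how much new information to write
    g = gate("candidate", math.tanh)   # the new information itself
    o = gate("output", sigmoid)        # how much memory to expose
    c = f * c_prev + i * g             # updated cell (long-term) state
    h = o * math.tanh(c)               # updated hidden (short-term) state
    return h, c


# Hand-picked weights (w_x, w_h, bias): the forget gate stays almost fully
# open and the input gate almost closed, so the cell state is preserved.
params = {
    "forget": (0.0, 0.0, 10.0),
    "input": (0.0, 0.0, -10.0),
    "candidate": (1.0, 0.0, 0.0),
    "output": (0.0, 0.0, 0.0),
}
h, c = 0.0, 1.0
for x in [0.0] * 50:
    h, c = lstm_step(x, h, c, params)
```

After 50 steps the cell state is still close to its starting value of 1.0, which is the "remembering for long periods" behavior described above; a plain recurrent unit that repeatedly squashes its state would lose that value far sooner.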

Handle Interruptions like Humans

Humans switch topics when communicating. For example, while booking a flight, a user may switch to buying flowers for Mother’s Day and then switch back and continue booking the flight. A digital assistant should be able to handle such interruptive mini-sessions during a session.
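One simple way to model such mini-sessions is a stack of open tasks: an interruption is pushed on top, and finishing it resumes whatever was underneath with its collected details intact. The sketch below is an assumption about how this could be done, not a description of any shipping assistant; the class, task and slot names are hypothetical.

```python
class DialogManager:
    """Keeps open tasks on a stack so an interrupted task can be resumed."""

    def __init__(self):
        self._stack = []

    def start(self, task):
        # A new topic goes on top; whatever was active is suspended.
        self._stack.append({"task": task, "slots": {}})

    def fill(self, slot, value):
        # Details always attach to the task currently being discussed.
        self._stack[-1]["slots"][slot] = value

    def finish(self):
        # Completing the active task resumes the one underneath it.
        return self._stack.pop()

    def active(self):
        return self._stack[-1] if self._stack else None


manager = DialogManager()
manager.start("book_flight")
manager.fill("destination", "Boston")
manager.start("buy_flowers")           # the user changes topic mid-booking
manager.fill("recipient", "mother")
flowers = manager.finish()             # flowers are handled
resumed = manager.active()             # back to the flight, details intact
```

A stack only covers strictly nested interruptions. Conversations where the user jumps freely between several open topics would need a richer structure.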

Engaging Assistants

Apps and websites that are engaging for users have much higher usage. Similarly, Digital Assistants that are engaging and entertaining will be much more successful.

Fallback interfaces

People use digital assistants to get things done. If the environment is noisy, the ability to fall back to typing or using a touch user interface is highly desirable.

As indicated above, in iOS 11 Siri is available as a text-based interface under accessibility. In a later version of iOS, Siri is coming to messaging environments with one or more people, as indicated in the Apple Siri patent.

Overall Accuracy and accounting for grammatical errors in questions

Siri still has a long way to go with respect to accuracy.

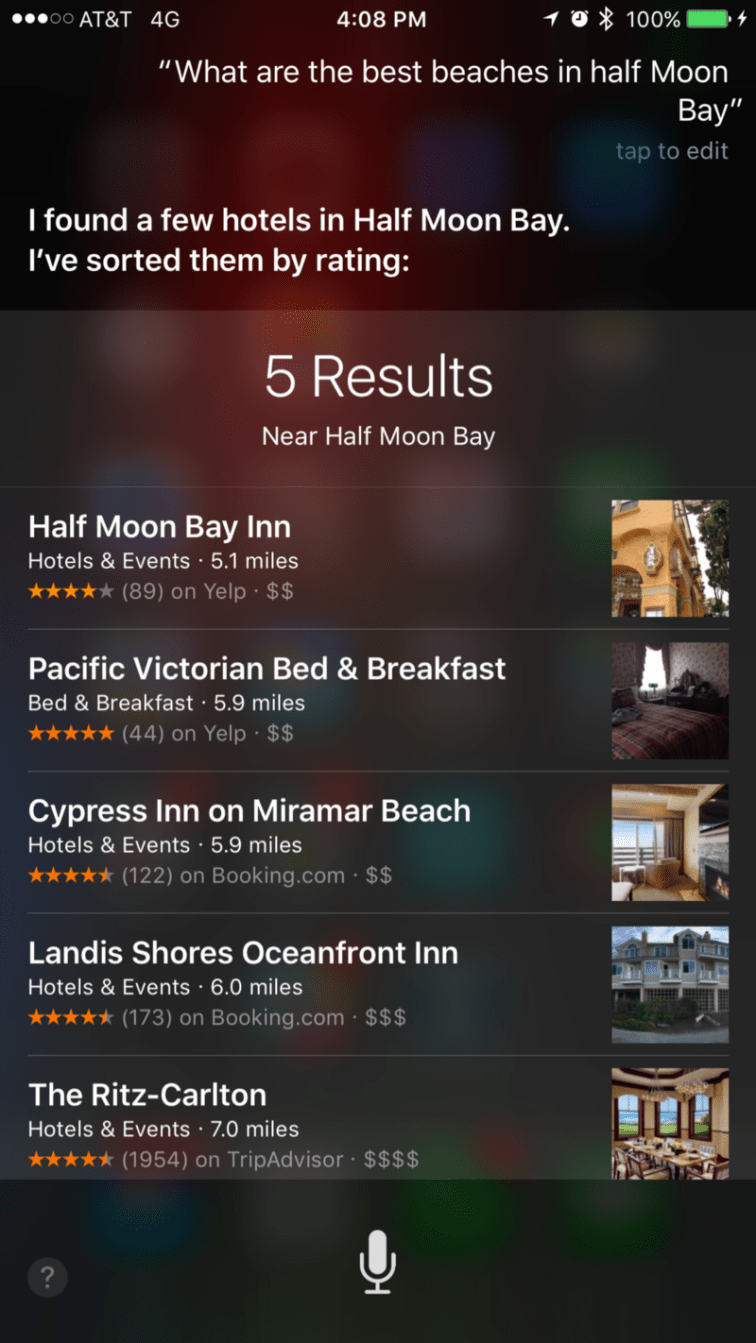

Siri did not account for a grammatically incorrect request “What are the best beaches in half Moon Bay”.

Siri interpreted the question as “Which are the best hotels in Half Moon Bay?”.

Outro

- Are digital assistants heading towards commoditization?

- Or is there still a lot of work to be done?

- Will Voice-Only Digital Assistants succeed?

- Or will Voice become another user interface?

People want to get things done and need fallback mechanisms to accomplish tasks, especially in environments where voice is not easy to use.

So until non-invasive telepathy, skin hearing, brain typing and wearable MRI become available, voice and touch-based user interfaces will continue to improve.

Although current implementations of artificial neural networks are quite different from the human brain, we still have a lot to learn about how the brain works. The human brain has 86 billion neurons, more than six times as many as a baboon. The connection density of convolutional neural networks is approximately 1/10, as neurons in a layer are connected to neurons in the previous or next layer. A neuron in the human brain is connected to 100,000 other neurons based on distance; however, the connection density of the human brain is about 1/1,000,000. DeepMind has had success with low-density networks for Artificial General Intelligence (AGI).

Open Water could help accelerate the understanding of the human brain further, so we can build even better AI models.

References

PDF: Deep Learning applied to NLP

PDF: Ensemble deep learning for speech recognition

PDF: Densely connected convolutional neural networks (DenseNets) are more efficient